What is Hadoop and why is it useful for data analysts to know how to work with it?

What is Hadoop?

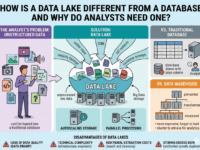

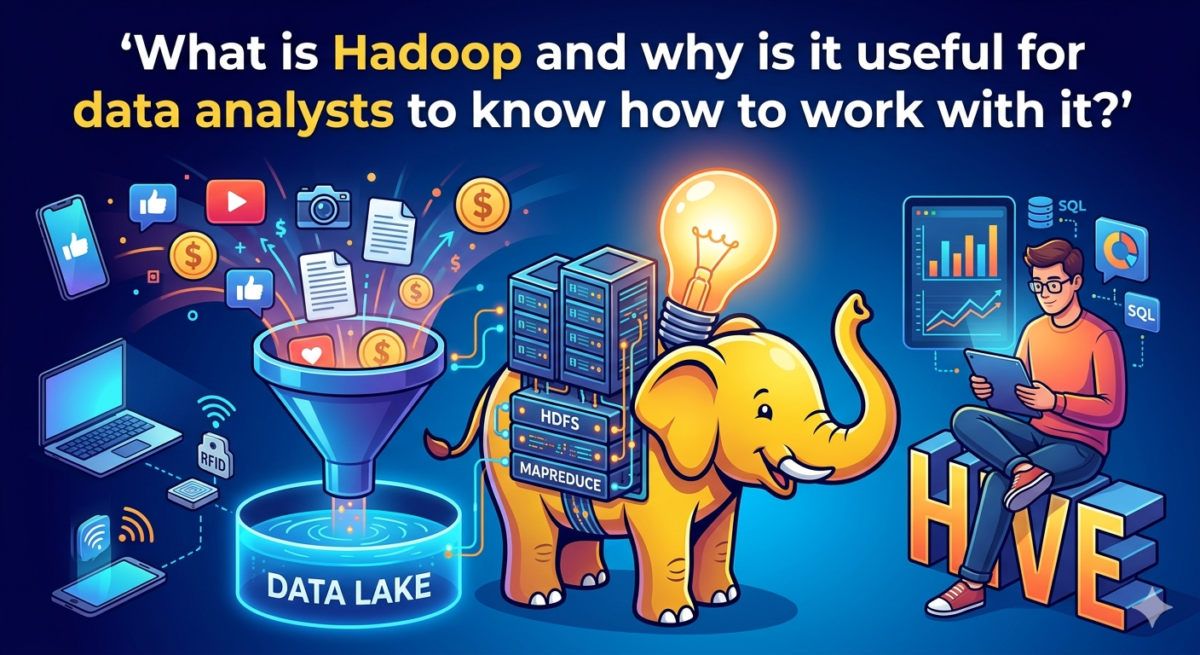

Apache Hadoop is a set of tools for building a big data system. It is designed for collecting, storing, and processing hundreds of terabytes of continuously arriving information. It is the foundation for building data lakes: large-scale repositories that store raw information, including unstructured data, for future analytics.

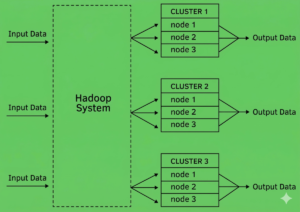

Hadoop is built around MapReduce, a model for distributed data processing. The system runs on a cluster: a group of individual servers, or nodes, that work together as a single resource. When a large task is submitted to the cluster, Hadoop divides it into many smaller subtasks and executes each on its own node. The subtasks run in parallel, and their partial results are then combined, so the final result arrives faster.
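The split, process, and combine idea can be illustrated with the classic word-count example. The sketch below runs in a single Python process: worker threads stand in for cluster nodes, and the function names (`map_chunk`, `shuffle`, `reduce_counts`, `word_count`) are illustrative assumptions, not part of any Hadoop API.

```python
from collections import defaultdict
from concurrent.futures import ThreadPoolExecutor


def map_chunk(chunk):
    """Map step: turn one chunk of text into (word, 1) pairs."""
    return [(word.lower(), 1) for word in chunk.split()]


def shuffle(mapped_results):
    """Shuffle step: group all emitted values by their key (the word)."""
    groups = defaultdict(list)
    for pairs in mapped_results:
        for word, count in pairs:
            groups[word].append(count)
    return groups


def reduce_counts(groups):
    """Reduce step: combine the values for each key into a single total."""
    return {word: sum(counts) for word, counts in groups.items()}


def word_count(chunks, workers=4):
    # Each chunk is one subtask; threads play the role of cluster nodes here.
    with ThreadPoolExecutor(max_workers=workers) as pool:
        mapped = list(pool.map(map_chunk, chunks))
    return reduce_counts(shuffle(mapped))


if __name__ == "__main__":
    chunks = ["big data big clusters", "data lakes store data", "big results"]
    print(word_count(chunks))
    # {'big': 3, 'data': 3, 'clusters': 1, 'lakes': 1, 'store': 1, 'results': 1}
```

In a real cluster, the chunks would be blocks of a file stored on different nodes, and the map work would run where the data lives rather than in local threads.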

Hadoop is an open-source product: it can be used completely free of charge and modified for specific business needs. Here are a few examples of tasks it can handle:

● A social network needs to store user correspondence. This amounts to millions of files daily, containing text, emoji, video, images, and audio: heterogeneous, unstructured information. All of it can be kept in Hadoop storage and retrieved for analytics or other uses as needed.

● A company that handles payment acquiring needs to store transaction information. Transactions share a single format, but there are 20 million of them per day, arriving too fast to be written efficiently to a traditional database. They can instead be stored in a Hadoop-based data warehouse, which is designed to ingest such volumes quickly.

● A large food delivery service operating across the country handles millions of orders per day. Order information is stored in a database and is critical to the proper operation of the application. The same data also needs to be analyzed to improve the service, so a copy can be kept in Hadoop storage and used for analytics without loading the production database or compromising the application itself.

Data analysts rarely work directly with Hadoop storage: this requires in-depth knowledge of the system’s architecture and algorithms, which data engineers more commonly have. Typically, analysts query tables in Hadoop add-ons such as Hive and extract data from there. They then analyze it using specialized tools, a skill you can learn in the “Data Analyst” course.

How Hadoop Came to Be

People have been working with data since the advent of the first computers. However, for a long time, the volumes of data were not critical, and classic databases and established technologies were sufficient for processing.

Over time, data volumes began to grow, and so did the demands on processing speed. In 2004, Google described a new concept: MapReduce. In 2005, software developers Doug Cutting and Mike Cafarella began building a software infrastructure for distributed computing based on this concept. The project was named after the toy elephant of one of the founders’ children, which is also where the logo came from.

Just a year later, Yahoo, then still a large and well-known company in the IT industry, expressed interest in the project. In 2008, Yahoo began generating its search index with Hadoop, and Hadoop became a top-level project of the Apache Software Foundation. That same year, Hadoop set a world record for data sorting performance: a cluster of 910 nodes sorted 1 TB of data in just 209 seconds. The technology quickly attracted interest from Last.fm, Facebook, The New York Times, and Amazon’s cloud services.

Hadoop gradually evolved, acquiring new technologies and modules, and becoming increasingly reliable and performant. An entire Hadoop ecosystem emerged, complete with tools to facilitate data management.

Hadoop is still used by many companies to build data warehouses and data lakes, although it is gradually becoming a thing of the past. It is being replaced by newer technologies for on-premises data centers and by big data processing in the cloud.

Hadoop Ecosystem Architecture

There are four main modules within the Hadoop project:

1. Hadoop Common.

A set of shared libraries and utilities used by the other modules, including tools for working with files. Essentially, it is the common foundation the other modules rely on and the connection point for additional tools.

2. HDFS (Hadoop Distributed File System).

A distributed file system for storing data across multiple nodes. It has built-in data redundancy: each block of data is replicated to several nodes (three by default), so data stays available and intact even if individual servers fail. It can store unstructured data in any format, which is retrieved with specialized tools rather than through simple queries.

3. YARN (Yet Another Resource Negotiator).

The cluster’s resource manager: it allocates the nodes’ computing power (CPU and memory) to applications and schedules their jobs.

4. Hadoop MapReduce.

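A framework for running MapReduce computations: it distributes input data across nodes, processes the pieces in parallel (the map step), and then combines the intermediate results (the reduce step).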
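To make HDFS redundancy more concrete, here is a rough sketch of how a file might be cut into blocks and copied across nodes. It assumes the common defaults of a 128 MB block size and a replication factor of 3, and it places replicas round-robin for simplicity; real HDFS uses a rack-aware placement policy. The node names and helper functions are hypothetical.

```python
import math

BLOCK_SIZE_MB = 128  # assumed default HDFS block size
REPLICATION = 3      # assumed default replication factor


def split_into_blocks(file_size_mb, block_size_mb=BLOCK_SIZE_MB):
    """Return the number of blocks needed to store a file of the given size."""
    return math.ceil(file_size_mb / block_size_mb)


def place_replicas(num_blocks, nodes, replication=REPLICATION):
    """Assign each block to `replication` distinct nodes (simplified round-robin)."""
    if replication > len(nodes):
        raise ValueError("replication factor exceeds the number of nodes")
    placement = {}
    for block in range(num_blocks):
        placement[block] = [nodes[(block + i) % len(nodes)] for i in range(replication)]
    return placement


def readable_after_failure(placement, failed_nodes):
    """A block is still readable if at least one of its replicas is on a live node."""
    return all(
        any(node not in failed_nodes for node in replicas)
        for replicas in placement.values()
    )


if __name__ == "__main__":
    nodes = ["node1", "node2", "node3", "node4", "node5"]
    blocks = split_into_blocks(1024)  # a 1 GB file
    placement = place_replicas(blocks, nodes)
    print(blocks, "blocks,", blocks * REPLICATION, "block copies")  # 8 blocks, 24 block copies
    print(readable_after_failure(placement, {"node2", "node4"}))    # True
```

Because every block lives on three distinct nodes, losing any two of the five nodes still leaves a live copy of each block; losing three can make some blocks unreadable.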

The Hadoop system also has numerous additional components, such as:

● Hive.

A data warehouse system that lets you query large data sets stored in HDFS without writing complex MapReduce jobs by hand: Hive translates queries into such jobs. It uses HiveQL (HQL), a query language similar to SQL. This is the tool data analysts most often work with.

● Pig.

A data transformation tool that can prepare data in various formats for future use.

● Flume.

A tool for collecting large data sets and delivering them to HDFS. It is suited to large streams of data, such as logs.

● ZooKeeper.

A coordination service that keeps configuration and state information consistent across nodes and helps them synchronize.
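Hive’s main convenience is that a SQL-like query replaces hand-written map and reduce code. As a rough illustration, a HiveQL aggregation such as `SELECT city, SUM(amount) FROM orders GROUP BY city` corresponds conceptually to the map and reduce steps sketched below in plain Python. The `orders` table, its columns, and the `group_by_sum` function are hypothetical, and Hive’s actual query planner is considerably more sophisticated.

```python
from collections import defaultdict


def group_by_sum(rows, key, value):
    """Emulate SELECT key, SUM(value) ... GROUP BY key as map + reduce."""
    # Map: emit a (key, value) pair for every input row.
    pairs = ((row[key], row[value]) for row in rows)
    # Shuffle + reduce: group the pairs by key and sum their values.
    totals = defaultdict(float)
    for k, v in pairs:
        totals[k] += v
    return dict(totals)


if __name__ == "__main__":
    orders = [
        {"city": "Boston", "amount": 20.0},
        {"city": "Denver", "amount": 15.5},
        {"city": "Boston", "amount": 9.5},
    ]
    print(group_by_sum(orders, "city", "amount"))  # {'Boston': 29.5, 'Denver': 15.5}
```

An analyst writing the HiveQL version never sees these steps; Hive generates and runs the equivalent jobs on the cluster.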

Functions of the technology

Hadoop is needed to:

● Increase data processing speed using the MapReduce model and parallel computing.

● Ensure data resilience by storing backup copies on other nodes.

● Work with data of any type and kind, including unstructured data, such as video.

Due to these capabilities, Hadoop is used in the following practical tasks:

● Creation of a data lake – an inexpensive and convenient storage for unstructured information.

● Processing data from social networks – this data is often unstructured and arrives in large volumes, but it reveals as much as possible about customers.

● Analysis of customer behavior – collecting information about customers’ actions on the website and in stores and using it to make forecasts.

● Risk management, log analysis, and response to failures and security breaches. Logs typically arrive as a large continuous stream that many conventional storage solutions struggle to handle.

● Processing data from the Internet of Things – sensors placed on various devices.

Where and why are Hadoop components used?

Hadoop is used for storing and analyzing big data. It is essential for companies that work with big data, including large retailers, social media, manufacturing, logistics, healthcare, and high-tech startups.

Examples of Hadoop use in various business sectors:

Retail. Collecting data on sales and customer behavior on the website. Collecting and storing product information: assortment, popularity, and inventory levels. Using this data to develop loyalty programs, personalized offers, promotions, and purchasing plans, and to increase sales.

Manufacturing. Storing information from equipment sensors. Predicting maintenance intervals, setting product prices, and reducing production costs.

Banks. Financial information and risk analysis. Fraud detection. Customer behavior prediction.

Hospitals. Storing medical data, which is almost always unstructured. Improving profitability, evaluating treatment effectiveness, and identifying disease risk factors.

In addition to Hadoop, this requires other tools for big data analysis, graphing, and neural network training, as well as qualified specialists who can work with them: data analysts and data science specialists.

Expert advice

Moses Gaspar

For those working as data analysts or planning to become one, Hadoop won’t be of much use at the start. Not all companies have Hadoop-based warehouses, and where they do exist, they’re typically managed by other specialists, such as data engineers. Learning this technology over time will be beneficial: it will strengthen a professional’s standing with employers and help them advance in their careers.