For about the last six months, the publisher has been actively exploring quantum computing and its practical applicability. For a long time we struggled to find a suitable article on this fascinating topic to translate, until one appeared on the Oracle blog. This publication serves as an excellent introduction to the software, hardware, and underlying scientific questions of this new paradigm, so it’s a must-read.

Interest in quantum computing has grown significantly in recent months and years. Research institutes, companies, and government agencies regularly publish new results reporting breakthrough achievements in this field. Meanwhile, less technical articles speculate on the potential implications of quantum computing, with predictions ranging from breaking most modern encryption techniques to cures for all diseases and the arrival of full-fledged AI. However, not all of these expectations are equally realistic.

If you’re a practicing, sober-minded programmer, you might be wondering where the line between fact and fiction lies in these claims, and how quantum computing will impact software development in the future.

Naturally, we’re still many years away from practical quantum computing hardware. However, the general principles of this paradigm are already understood, and abstractions exist that allow developers to write applications that use quantum computing capabilities by running them on simulators.

Is quantum computing just another CPU boost?

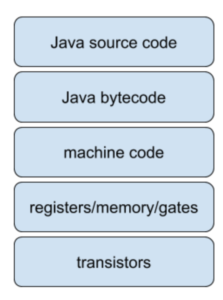

Traditional software development using classical computers involves translating a high-level programming language (such as Java) into operations performed on a large number of (hardware) transistors.

Figure 1 schematizes this process in its simplest form: Java source code is compiled into platform-independent bytecode, which is in turn translated into platform-specific machine code. The machine code uses a series of simple operations (gates) executed in memory. The primary hardware component used for this purpose is the well-known transistor.

Fig. 1. Translating a high-level programming language into operations performed on transistors.

The performance gains of recent years have come primarily from improvements in hardware technology. The size of a single transistor has shrunk dramatically, and the more transistors that can be packed into each square millimeter, the more memory and computing power a computer will have.

Quantum computing is a disruptive technology not only because its simplest computing units are qubits, which we’ll discuss below, rather than classical transistors, but also because its gates are designed differently. Therefore, the stack shown in Figure 1 does not apply to quantum computing.

Will quantum computing break the entire stack above, all the way up to the Java level?

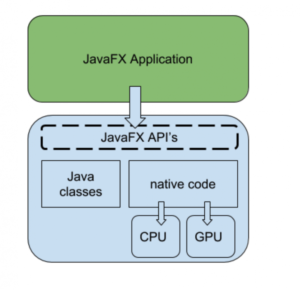

The short answer is “not quite.” Scientists are gradually concluding that quantum computers will be particularly good at solving specific problems, while other tasks are more efficiently handled by traditional computers. Sounds familiar, right? A similar situation occurs when comparing GPUs and CPUs. While GPUs also use transistors, they operate differently from CPUs. However, many applications written in high-level languages leverage both CPU and GPU capabilities under the hood. GPUs are very good at vector processing, and many applications and libraries separate the CPU and GPU workflows.

For example, this is precisely the situation when using JavaFX or Deeplearning4j. If you write a user interface application using JavaFX, you’re working exclusively with Java code (and maybe also with FXML for UI declarations). When a JavaFX scene needs to be displayed on the screen, the JavaFX implementations use shaders and textures to do so, directly communicating with the low-level GPU drivers, as shown in Figure 2. Therefore, you don’t have to worry about which parts of your code are better suited for the CPU and which for the GPU.

Figure 2. JavaFX Delegates Work to the GPU and CPU.

As shown in Figure 2, the JavaFX implementation code delegates work, passing it on to the GPU and CPU. Although these operations are hidden from the developer (not exposed through the API), some knowledge of the GPU is often useful when developing more performant JavaFX applications.

A similar situation arises with Deeplearning4j. Deeplearning4j provides a number of implementations for performing the required vector and matrix operations, some of which utilize the GPU. However, as an end developer, you don’t care whether your code uses the CPU or the GPU.

Quantum computers appear to be excellent at solving problems whose cost grows exponentially with the size of the input, and which are therefore difficult or nearly impossible to solve using classical computers. Accordingly, experts are discussing a hybrid execution scenario: a typical end-to-end application contains classical code running on a CPU, but it may also contain quantum code.

How can a system execute quantum code?

Today, quantum computing hardware remains highly experimental. While large corporations and, presumably, some governments are developing prototypes, such technology is not widely available. But when it does appear, it could take various forms:

- A quantum coprocessor can be integrated with the CPU in a system.

- Quantum tasks can be delegated to quantum cloud systems.

While there remains considerable uncertainty about the practical implications of such solutions, we are increasingly reaching agreement on what quantum code should look like. At the lowest level, the following building blocks should exist: qubits and quantum gates. These can be used to create quantum simulators that implement the expected behavior.

Therefore, a quantum simulator is an ideal tool for developing quantum code today.

The results it produces should be virtually identical to those obtained on real quantum computer hardware—but the simulator runs much slower, since the quantum effects that accelerate quantum hardware must be simulated using traditional software.

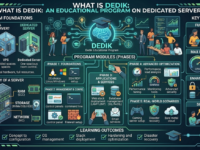

What are the basic building blocks of quantum computing?

It is often useful to compare classical computing with quantum computing. In classical computing, we have bits and gates.

A bit holds a single binary digit of information: its value can be either 0 or 1.

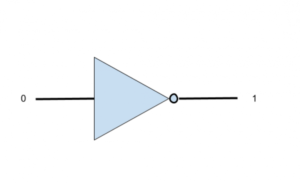

A gate acts on one or more bits and can manipulate them. For example, the NOT gate shown in Figure 3 reverses the value of a bit. If the input is 0, the output of the NOT gate will be 1, and vice versa.

Fig. 3. NOT gate

In quantum computing, we have the equivalents of bits and gates. The quantum equivalent of a bit is a qubit. The value of a qubit can be either 0 or 1, like a classical bit, but it can also be in what is called a superposition. This is a difficult-to-imagine concept, according to which a qubit can simultaneously be in both states: 0 and 1.

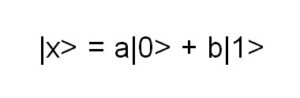

When a qubit is in superposition, its value is a linear combination of the 0 and 1 states. This can be written as shown in Figure 4:

Fig. 4. An equality where a qubit is in a superposition.

Note that qubits are often written in bra-ket notation, where the variable name is placed between the “|” and “>” symbols.

The expression in Figure 4 says that qubit x is in a superposition of the states |0> and |1>. This does not mean that it is in state |0> OR state |1>; rather, its state is undetermined until it is measured.

In fact, it is in both states simultaneously and can be manipulated as such. However, when we measure the qubit, it will be in one state, either |0> or |1>. There is another constraint in the expression above: a^2 + b^2 = 1.

The values of a and b are probabilistic: there is a probability a^2 that when we measure qubit |x>, it will contain the value |0>, and a probability b^2 that the measured qubit will contain the value |1>.
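Written out, the relationships described above look as follows. The symbols a and b are the ones used in the text; the amplitudes are assumed to be real here, and the concrete values in the last line are just one illustrative choice.

```latex
\begin{align*}
|x\rangle &= a\,|0\rangle + b\,|1\rangle, \qquad a^2 + b^2 = 1,\\
P(\text{measure } 0) &= a^2, \qquad P(\text{measure } 1) = b^2,\\
a = b = \tfrac{1}{\sqrt{2}} &\;\Rightarrow\; P(\text{measure } 0) = P(\text{measure } 1) = \tfrac{1}{2}.
\end{align*}
```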

There’s a key limiting factor that curtails the joys of quantum computing: once a qubit is measured, all information about the superposition it was in is lost. After measurement, the qubit’s value is simply 0 or 1.
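The loss of information on measurement can be illustrated with a small, library-free Java sketch. The class `CollapsingQubit` and its methods are hypothetical names invented for this illustration, and the amplitudes are assumed to be real; this is a toy model, not how a real simulator is structured.

```java
import java.util.Random;

/** Toy model of one qubit with real amplitudes a (for |0>) and b (for |1>). */
class CollapsingQubit {
    private double a;
    private double b;

    CollapsingQubit(double a, double b) {
        // Enforce the constraint a^2 + b^2 = 1 from the text.
        if (Math.abs(a * a + b * b - 1.0) > 1e-9) {
            throw new IllegalArgumentException("a^2 + b^2 must equal 1");
        }
        this.a = a;
        this.b = b;
    }

    /** Probability that a measurement yields 1. */
    double probabilityOfOne() {
        return b * b;
    }

    /** Measures the qubit: returns 0 or 1 and collapses the state accordingly. */
    int measure(Random random) {
        int outcome = random.nextDouble() < b * b ? 1 : 0;
        // The superposition is gone: the state is now exactly |0> or |1>.
        a = (outcome == 0) ? 1.0 : 0.0;
        b = (outcome == 1) ? 1.0 : 0.0;
        return outcome;
    }

    public static void main(String[] args) {
        double s = 1.0 / Math.sqrt(2.0);
        CollapsingQubit q = new CollapsingQubit(s, s);
        System.out.println("P(1) before measuring: " + q.probabilityOfOne());
        System.out.println("first measurement:     " + q.measure(new Random()));
        System.out.println("P(1) after measuring:  " + q.probabilityOfOne());
        System.out.println("second measurement:    " + q.measure(new Random()));
    }
}
```

In this model, the second measurement always repeats the first: once the state has collapsed, the original values of a and b can no longer be recovered from the qubit.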

During calculations, a qubit in superposition can simultaneously correspond to 0 and 1 (with varying probabilities). If we have two qubits, they can represent four states (00, 01, 10, and 11), again with varying probabilities. This is where we get to the heart of the power of quantum computers. With eight classical bits, we can represent exactly one number between 0 and 255. The value of each of the eight bits will be either 0 or 1. With eight qubits, we can simultaneously represent all numbers from 0 to 255.
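To make the counting argument concrete, here is a minimal Java sketch. The names `StateSpace`, `basisStates`, and `uniformSuperposition` are illustrative and not taken from any library, and amplitudes are again assumed to be real. It shows that n qubits span 2^n basis states, and builds the equal superposition in which every one of those values is equally likely to be measured.

```java
import java.util.Arrays;

/** Illustrative helper: how many basis states (and amplitudes) n qubits span. */
class StateSpace {
    /** Number of basis states of n qubits: 2^n. */
    static long basisStates(int n) {
        if (n < 0 || n > 62) {
            throw new IllegalArgumentException("n must be between 0 and 62");
        }
        return 1L << n;
    }

    /** Equal superposition over all basis states: every amplitude is 1/sqrt(2^n). */
    static double[] uniformSuperposition(int n) {
        if (n < 0 || n > 20) {
            throw new IllegalArgumentException("n must be between 0 and 20 in this sketch");
        }
        int size = 1 << n;
        double[] amplitudes = new double[size];
        Arrays.fill(amplitudes, 1.0 / Math.sqrt(size));
        return amplitudes;
    }

    public static void main(String[] args) {
        System.out.println("2 qubits span " + basisStates(2) + " basis states");
        System.out.println("8 qubits span " + basisStates(8) + " basis states");
        double[] amplitudes = uniformSuperposition(8);
        // Squaring an amplitude gives the probability of measuring that value.
        System.out.println("probability of each value: " + amplitudes[0] * amplitudes[0]);
    }
}
```

The array length doubles with every additional qubit, which is one reason a simulator that stores the full state on classical hardware slows down quickly as the number of qubits grows.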

What is the use of superposition if only one state can be measured?

Often, the algorithm’s outcome is simple (“yes” or “no”), but arriving at it requires a massive amount of parallel computation. By keeping qubits in superposition during computations, any number of different options can be considered at once. Without having to solve for each combination, a quantum computer can calculate all options in a single step.

Then, in many quantum algorithms, comes the next crucial step: linking the algorithm’s outcome to a measurement that yields a meaningful result. This often involves interference: interesting results constructively overlap, while uninteresting ones cancel each other out (destructive interference).

How can a qubit be “transformed” into a superposition state?

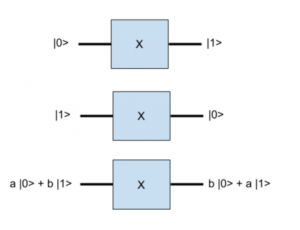

Just as classical gates manipulate bits, quantum gates manipulate qubits. Some quantum gates resemble classical gates; for example, the Pauli-X gate transforms a qubit from the state a|0> + b|1> to the state b|0> + a|1>, which is similar to the operation of the classical NOT gate. Indeed, when a = 1 and b = 0, the qubit was initially in the state |0>. After applying the Pauli-X gate, this qubit will transition to the state |1>, as shown in Figure 5.

Figure 5. The result of applying the Pauli-X gate.

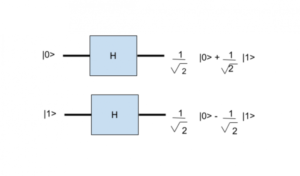

The Hadamard gate is of particular interest in this context. It transforms the qubit from the state |0> into a superposition: 1/sqrt(2)* (|0> + |1>), as shown in Figure 6.

Figure 6. The result of applying the Hadamard gate.

After we apply a Hadamard gate to a qubit and measure the qubit, there is a 50% chance that the qubit’s value will be 0 and a 50% chance that the qubit’s value will be 1. Until the qubit is measured, it remains in a superposition state.
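Both gates can be sketched in a few lines of plain Java by treating a qubit as a pair of real amplitudes {a, b}. The class and method names are illustrative assumptions. The Hadamard formula used here, (a, b) -> ((a + b)/sqrt(2), (a - b)/sqrt(2)), is the standard definition; the article only states its effect on |0>, which this formula reproduces.

```java
/** Illustrative single-qubit gates acting on real amplitudes {a, b} of a|0> + b|1>. */
class SingleQubitGates {
    /** Pauli-X: swaps the amplitudes, a|0> + b|1> becomes b|0> + a|1>. */
    static double[] pauliX(double[] state) {
        return new double[] { state[1], state[0] };
    }

    /** Hadamard: (a, b) becomes ((a + b)/sqrt(2), (a - b)/sqrt(2)). */
    static double[] hadamard(double[] state) {
        double s = 1.0 / Math.sqrt(2.0);
        return new double[] { s * (state[0] + state[1]), s * (state[0] - state[1]) };
    }

    /** Probability that measuring the given state yields 1. */
    static double probabilityOfOne(double[] state) {
        return state[1] * state[1];
    }

    public static void main(String[] args) {
        double[] zero = { 1.0, 0.0 }; // the state |0>

        System.out.println("P(1) after Pauli-X:        " + probabilityOfOne(pauliX(zero)));
        System.out.println("P(1) after Hadamard:       " + probabilityOfOne(hadamard(zero)));
        System.out.println("P(1) after Hadamard twice: " + probabilityOfOne(hadamard(hadamard(zero))));
    }
}
```

Applying the Hadamard gate twice returns the qubit to |0>: the two contributions to the |1> amplitude cancel while the contributions to |0> add up, which is a small instance of the destructive and constructive interference described earlier.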

How is all this possible?

If you’re truly interested in the answer to this question, you’ll need to delve into quantum physics. Fortunately, however, understanding the entire theoretical basis of these phenomena isn’t necessary. While the phenomenon of superposition may seem incomprehensible, it’s important to emphasize that these very properties are characteristic of elementary particles in nature. Consequently, quantum computing is much closer to the foundations of physical reality than it initially appears.

Should we wait a few years before looking at quantum computing?

No. If you wait, you’ll be too late. Theoretically, it’s possible to first develop the hardware and then move on to software research to see what can be achieved with it. However, all the concepts are now more or less clear, and quantum simulators can already be written in popular languages, including Java, C#, Python, and others.

These simulators can then be used to develop quantum algorithms. Although these algorithms won’t provide the same performance boost that would be achievable with real quantum hardware, they should be fully functional.

Therefore, if you’re developing a quantum algorithm now, you have time to perfect it, and you can launch it when quantum hardware becomes available.

Quantum algorithms require a different way of thinking than classical ones. Outstanding scientists began developing quantum algorithms back in the last century, and now more and more papers are being published describing such algorithms, including those for integer factorization, list searching, path optimization, and much more.

There are other reasons why it might be worth pursuing quantum computing today. Refactoring a software system in a large modern company isn’t something that can be accomplished overnight. Yet one area that quantum computing will truly revolutionize is encryption, which rests on the assumption that it’s virtually impossible to factor large integers into primes on a classical computer.

While it may be many years before quantum computers are large enough to easily solve integer factorization, developers know that it takes many years to both modify systems and implement new, more secure technologies.

How can one learn to work with quantum algorithms in Java?

You can download and try out Strange, an open-source quantum computer simulator written in Java. Strange simulates a quantum algorithm by creating a series of qubits and applying several quantum gates to them.

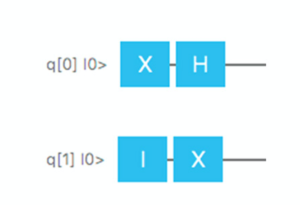

As a simple example, let’s create two qubits, q[0] and q[1], so that they are both initially in the 0 state. Then, apply two simple gates to each qubit, so that the operation graphically corresponds to Figure 7.

The first qubit is first fed to a Pauli-X gate and then to a Hadamard gate. The Pauli-X gate takes it from the |0> state to |1>, and the Hadamard gate puts it into a superposition with equal probabilities of |0> and |1>. Therefore, if we execute the entire sequence 1,000 times and measure the first qubit at the end of each run, we can expect it to have a value of 0 in about 500 cases and a value of 1 in about 500 cases.

The second qubit is even simpler. We start with the Identity gate, which leaves the qubit unchanged, and then pass it to the Pauli-X gate, which changes its value from 0 to 1.

Fig. 7. An example of a quantum algorithm that can be simulated using Strange.

To verify the correctness of our reasoning, we can create a simple quantum program using Strange.

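```java
import java.util.Arrays;

// Assumed package layout of recent Strange releases; older releases used com.gluonhq.strange.
import org.redfx.strange.Program;
import org.redfx.strange.Qubit;
import org.redfx.strange.Result;
import org.redfx.strange.Step;
import org.redfx.strange.gate.Hadamard;
import org.redfx.strange.gate.X;
import org.redfx.strange.local.SimpleQuantumExecutionEnvironment;

public class Main { // the class name is arbitrary
    public static void main(String[] args) {
        Program p = new Program(2);          // two qubits, both starting in |0>
        Step s = new Step();
        s.addGate(new X(0));                 // step 1: Pauli-X on q[0]
        p.addStep(s);
        Step t = new Step();
        t.addGate(new Hadamard(0));          // step 2: Hadamard on q[0] ...
        t.addGate(new X(1));                 // ... and Pauli-X on q[1]
        p.addStep(t);
        SimpleQuantumExecutionEnvironment sqee = new SimpleQuantumExecutionEnvironment();
        Result res = sqee.runProgram(p);
        Qubit[] qubits = res.getQubits();
        Arrays.asList(qubits).forEach(q -> System.out.println(
                "qubit with probability on 1 = " + q.getProbability()
                + ", measured it gives " + q.measure()));
    }
}
```

This application creates a quantum program with two qubits: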

```java
Program p = new Program(2);
```

This program goes through two steps. In the first step, we apply the Pauli-X gate to q[0]. We don’t apply any gate to q[1], which implicitly means that the Identity gate acts on it. We add this step to the program:

```java
Step s = new Step();
s.addGate(new X(0));
p.addStep(s);
```

Then we move on to the second step, where we apply the Hadamard gate to q[0] and the Pauli-X gate to q[1]; we add this step to the program as well:

```java
Step t = new Step();
t.addGate(new Hadamard(0));
t.addGate(new X(1));
p.addStep(t);
```

So, our program is ready. Now let’s run it. Strange has a built-in quantum simulator, but it can also delegate execution to a cloud service, such as Oracle Cloud.

In the following example, we use a simple built-in simulator, run the program, and obtain the resulting qubits:

```java
SimpleQuantumExecutionEnvironment sqee = new SimpleQuantumExecutionEnvironment();
Result res = sqee.runProgram(p);
Qubit[] qubits = res.getQubits();
```

Before we measure the qubits (and lose all information about the superposition), let’s display the probabilities. Then we measure the qubits and look at the values:

```java
Arrays.asList(qubits).forEach(q -> System.out.println(
        "qubit with probability on 1 = " + q.getProbability()
        + ", measured it gives " + q.measure()));
```

Running this application yields the following output:

```
qubit with probability on 1 = 0.50, measured it gives 1
qubit with probability on 1 = 1, measured it gives 1
```

Note that the first qubit can also be measured as 0, as expected. If you run this program multiple times, the first qubit’s value will, on average, be 0 half the time and 1 half the time.
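The half-and-half behavior of the first qubit can also be checked without Strange. The following library-free sketch is an assumption-laden stand-in, not Strange’s implementation: the class `CircuitShots` and its methods are hypothetical, amplitudes are real, and each qubit is tracked separately, which works only because this circuit never entangles the two qubits.

```java
import java.util.Random;

/** Library-free sketch of the two-qubit example circuit; not part of Strange. */
class CircuitShots {
    /** Pauli-X on amplitudes {a, b}: swaps them. */
    static double[] x(double[] q) {
        return new double[] { q[1], q[0] };
    }

    /** Hadamard on amplitudes {a, b}. */
    static double[] h(double[] q) {
        double s = 1.0 / Math.sqrt(2.0);
        return new double[] { s * (q[0] + q[1]), s * (q[0] - q[1]) };
    }

    /** Samples one measurement outcome (0 or 1) from amplitudes {a, b}. */
    static int measure(double[] q, Random random) {
        return random.nextDouble() < q[1] * q[1] ? 1 : 0;
    }

    /** Runs the circuit the given number of times; returns how often q[0] and q[1] measured 1. */
    static int[] countOnes(int shots, Random random) {
        int[] ones = new int[2];
        for (int i = 0; i < shots; i++) {
            double[] q0 = h(x(new double[] { 1.0, 0.0 })); // Pauli-X, then Hadamard
            double[] q1 = x(new double[] { 1.0, 0.0 });    // Identity, then Pauli-X
            ones[0] += measure(q0, random);
            ones[1] += measure(q1, random);
        }
        return ones;
    }

    public static void main(String[] args) {
        int[] ones = countOnes(1000, new Random());
        System.out.println("q[0] measured 1 in " + ones[0] + " of 1000 runs");
        System.out.println("q[1] measured 1 in " + ones[1] + " of 1000 runs");
    }
}
```

Over 1,000 runs the count for q[0] should land near 500 and vary from run to run, while q[1] measures 1 every time.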

Is that all you need to know about quantum computing?

Of course not. We haven’t touched on several important concepts here, including entanglement, which enables interaction between two qubits even when they are physically very far apart. We haven’t discussed the most famous quantum algorithms, including Shor’s algorithm, which enables factoring integers into prime factors. We’ve also ignored several mathematical and physical facts, including that in the superposition |x> = a|0> + b|1>, both a and b can be complex.

However, the main goal of this article was to give you an overview of quantum computing and to help you understand how it fits into the future of software development.